The landscape of Enterprise Learning and Development (L&D) is currently defined by a widening "Content Gap." While the demand for high-quality, video-first training has scaled exponentially, the production cycles for such content remain tethered to legacy workflows: high-cost studios, manual scriptwriting, and fragmented post-production.

For CXOs and L&D leaders, the challenge isn't just creating content; it's content velocity. How do you deploy expert-level training at the speed of business transformation?

The typical answer has been AI video generation tools. But these tools introduce their own friction: you write a lesson in your LMS, then copy that text into a third-party platform, configure an avatar, generate the video, download the file, and upload it back. The content still lives in two places, and every update requires the same manual loop.

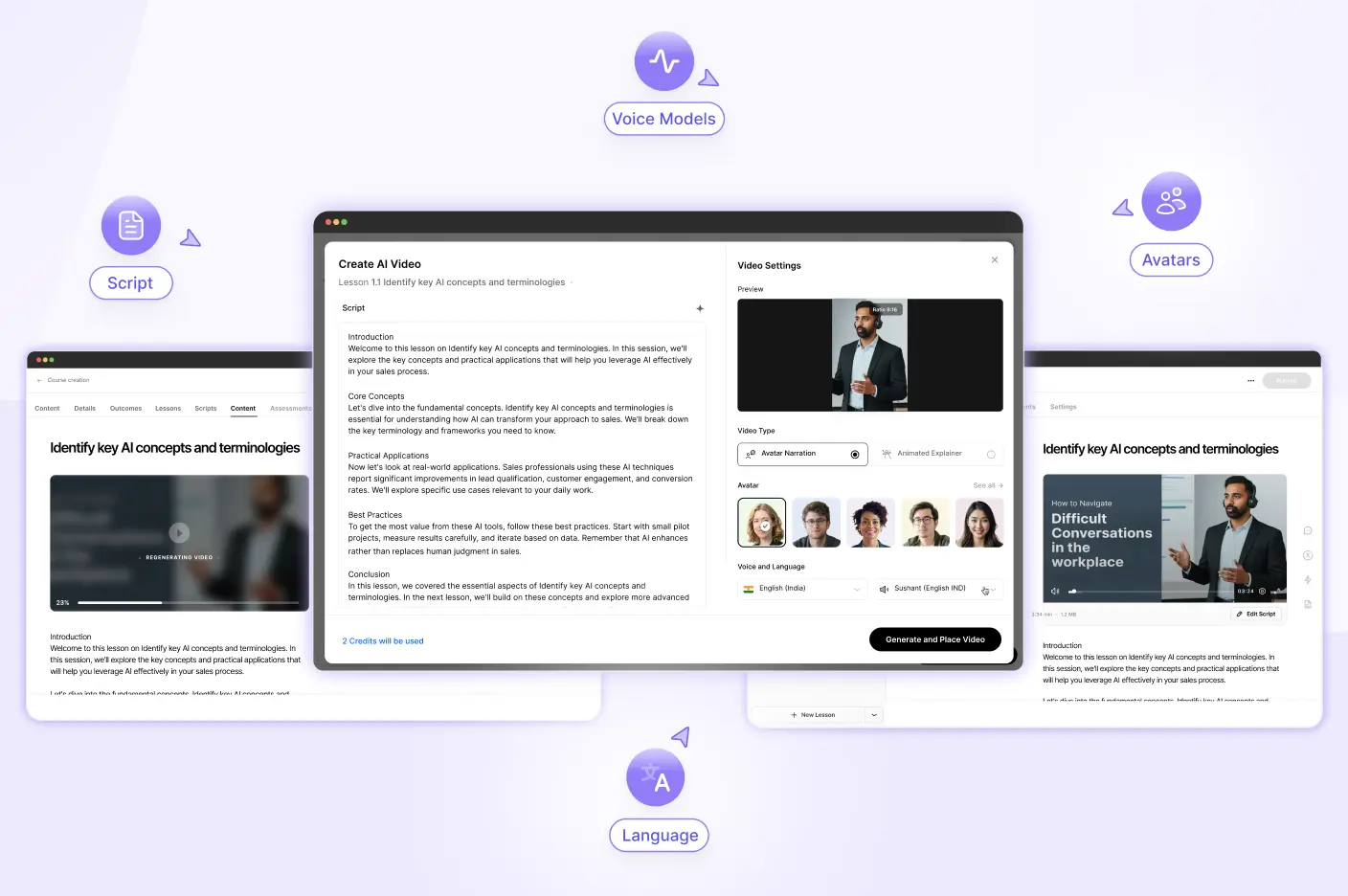

At Lyearn, we asked a different question: what if the lesson itself was the input? What if your AI-powered LMS could generate the video directly from that content without requiring export, reformatting, or re-import? That principle is the foundation of our latest architectural milestone: AI video generation built directly into the course authoring workflow. Your course content, even in rough draft form, becomes the direct input for professional video synthesis without ever leaving the authoring environment.

The Problem with Fragmented Video Production

Historically, creating a video-based course required an inefficient "swivel-chair" process. Instructional designers would write a lesson in an LMS, copy that text into an external AI video generator, spend hours tweaking an avatar, export the MP4, and finally re-upload it back into the lesson.

This fragmentation results in contextual drift. The video exists as a siloed asset, disconnected from the pedagogical flow of the lesson. Furthermore, it creates a massive "Technical Debt" for L&D teams: if the underlying technical documentation or company policy changes, the video becomes instantly obsolete, requiring a total rebuild in a third-party application.

Lyearn's AI-powered LMS eliminates this friction by bringing AI video generation directly into the lesson builder. By leveraging the lesson's native context, our system ensures that every frame of video is technically and tonally aligned with the surrounding curriculum.

Architectural Deep Dive: The In-Situ Generation Pipeline

To the end user, the process is seamless. To the technical leader, it is an orchestration of LLMs (Large Language Models) and video synthesis engines working in a secure, unified environment. Here is the technical breakdown of the Lyearn AI video pipeline.

1. Contextual Grounding and Script Synthesis

Most AI video generators require a manual prompt or a "cold" script. Lyearn changes the input vector through a process we call Contextual Grounding. When an admin initiates video creation within a lesson, our AI parses the existing lesson text, learning objectives, and metadata.

Our AI doesn’t just summarize your text; it converts it into a structured, conversational script. You always have the final say: the script is fully editable, so you can refine the message or add your own personal touch before moving to production.

Here's what makes this different: you don't need fully polished lesson content to start. Drop in a rough idea, a few bullet points, or even a brief outline of what the video should cover. The AI will structure it into a proper, instructionally sound script the same way it would with a complete lesson. This removes the assumption that you need finished content before you can generate video.
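As a rough illustration of the grounding step described above, the sketch below assembles lesson text, learning objectives, and metadata into a single script-generation prompt. Every name in it (`LessonContext`, `build_script_prompt`, the prompt wording) is a hypothetical stand-in chosen for this example and is not Lyearn's actual implementation or API. The call to a language model is deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass
class LessonContext:
    """Hypothetical container for what the authoring tool already knows."""
    title: str
    body: str  # a full lesson, a few bullet points, or a rough idea
    objectives: list[str] = field(default_factory=list)
    metadata: dict[str, str] = field(default_factory=dict)


def build_script_prompt(ctx: LessonContext) -> str:
    """Combine the lesson context into one prompt for a script-writing model."""
    lines = [
        "Write a conversational narration script for a training video.",
        f"Lesson title: {ctx.title}",
    ]
    if ctx.objectives:
        lines.append("Learning objectives:")
        lines.extend(f"- {obj}" for obj in ctx.objectives)
    # Sort metadata keys so the same lesson always yields the same prompt.
    for key, value in sorted(ctx.metadata.items()):
        lines.append(f"{key}: {value}")
    lines.append("Source content (may be a rough draft):")
    lines.append(ctx.body.strip())
    return "\n".join(lines)


# A rough outline is enough input; no polished lesson is required.
draft = LessonContext(
    title="Password Hygiene",
    body="- use a password manager\n- turn on MFA",
    objectives=["Explain why MFA matters"],
    metadata={"audience": "new hires"},
)
prompt = build_script_prompt(draft)
```

The design point is that the author never writes a prompt by hand: the same function handles a finished lesson and a two-bullet outline, and the generated script remains editable afterward.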

2. Choosing the Right Format for Your Content

- Avatar Narration (Live): Instead of hiring actors or booking studio time, this mode uses hyper-realistic digital personas that mimic human micro-expressions and natural speech patterns. It is the ideal choice for high-stakes communication such as executive briefings, soft-skills training, or global compliance updates. By putting a "real" face to the information, you increase learner accountability and retention through social presence, making it feel like a 1-on-1 coaching session rather than a static presentation.

- Animated Explainer (Coming Soon): Some technical concepts like data architecture, supply chain flows, or internal software logic are difficult to explain with a talking head alone. This mode transforms your lesson text into a visual storyboard, using icons, flowcharts, and kinetic typography to guide the learner's eye through complex processes. This is the "how-it-works" engine of your LXP.

3. High-Fidelity Persona Synthesis

Once the script is finalized, the platform moves into the configuration phase. This is where Lyearn’s AI-powered course creation capabilities provide enterprise-grade customization:

- Avatar Selection: A diverse library of hyper-realistic digital personas.

- Voice and Phonetics: Fine-tuned speech synthesis that supports multiple languages and dialects, ensuring global scalability.

- Environmental Logic: Selection of background colors and video orientations (Landscape for desktop-optimized learning or Portrait for mobile-first workforce engagement).
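The configuration options listed above could be modeled as a small, validated settings object. This is a minimal sketch under stated assumptions: `VideoConfig`, `Orientation`, the field names, the `#RRGGBB` color format, and the 16:9 and 9:16 aspect ratios are all illustrative choices, not documented Lyearn settings.

```python
from dataclasses import dataclass
from enum import Enum

HEX_DIGITS = "0123456789abcdefABCDEF"


class Orientation(Enum):
    LANDSCAPE = "landscape"  # desktop-optimized learning
    PORTRAIT = "portrait"    # mobile-first workforce engagement


@dataclass(frozen=True)
class VideoConfig:
    """Hypothetical bundle of the choices made in the configuration phase."""
    avatar_id: str
    voice_language: str  # e.g. a language tag such as "en-GB"
    background_color: str
    orientation: Orientation = Orientation.LANDSCAPE

    def __post_init__(self) -> None:
        # Assumed format: accept only colors written as #RRGGBB.
        color = self.background_color
        valid = (
            len(color) == 7
            and color.startswith("#")
            and all(ch in HEX_DIGITS for ch in color[1:])
        )
        if not valid:
            raise ValueError(f"background_color must look like #RRGGBB, got {color!r}")

    def aspect_ratio(self) -> str:
        # Assumed mapping: widescreen for landscape, vertical for portrait.
        return "16:9" if self.orientation is Orientation.LANDSCAPE else "9:16"


config = VideoConfig(
    avatar_id="avatar-01",
    voice_language="en-GB",
    background_color="#1A2B3C",
    orientation=Orientation.PORTRAIT,
)
```

Validating at construction time means a malformed setting is rejected before any rendering work starts, which matters when generation is the expensive step.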

Instructional Integrity: Reducing Cognitive Load

From a technical instructional design perspective, the goal of video is to reduce "Extraneous Cognitive Load." When a learner has to read a massive wall of text while watching an unrelated video, their ability to retain information plummets.

Lyearn’s AI course creation ensures Instructional Integrity by forcing a tight correlation between the visual and the textual. Because the AI "understands" the lesson, the resulting video acts as a reinforcement tool rather than a distraction. The avatar provides the "Social Agency" needed to keep learners engaged, while the platform handles the technical delivery.

The Strategic ROI: Beyond Production Costs

While reducing the "cost per minute" of video is a clear benefit, the true ROI is found in Organizational Agility.

- Accelerated Speed-to-Market: Traditional video production creates a lag between a business change and the corresponding training. With an integrated AI pipeline, your learning materials can pivot as fast as your strategy. Whether it’s a new product launch or a sudden policy update, you can deploy professional-grade video in minutes, not weeks.

- Scalability for Every Department: High-quality video has traditionally been reserved for "high-priority" courses due to budget constraints. By removing the cost of studios and actors, you can now provide engaging, video-first learning for every niche department and technical process across the organization, regardless of their specific budget.

- Consolidated Technical Infrastructure: Managing multiple licenses for video editing software, AI synthesis tools, and external storage creates technical bloat and security risks. Bringing these capabilities into a single, secure LMS environment simplifies your tech stack, reduces administrative overhead, and ensures that your internal data never leaves your protected ecosystem.

- Maintenance Velocity and Version Control: Because videos are generated natively within the LMS, updates happen at the speed of a text edit. When a policy changes or product documentation shifts, simply revise the lesson text and regenerate the video in minutes, not days. The platform keeps your lesson content and the corresponding video in sync, eliminating the risk of outdated visual assets contradicting current written materials.
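One plausible way to keep a video tied to the text it was generated from is to store a fingerprint of that text and compare it on every edit. The sketch below is an assumption about how such a check could work, not a description of Lyearn's internals; `fingerprint`, `GeneratedVideo`, and `is_stale` are hypothetical names.

```python
import hashlib
from dataclasses import dataclass


def fingerprint(lesson_text: str) -> str:
    """SHA-256 of the lesson text, ignoring surrounding and end-of-line whitespace."""
    normalized = "\n".join(
        line.rstrip() for line in lesson_text.strip().splitlines()
    )
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


@dataclass
class GeneratedVideo:
    video_id: str
    source_fingerprint: str  # fingerprint of the text the video was built from


def is_stale(video: GeneratedVideo, current_lesson_text: str) -> bool:
    """True when the lesson text has changed since the video was generated."""
    return video.source_fingerprint != fingerprint(current_lesson_text)


v1_text = "Expense reports are due within 30 days."
video = GeneratedVideo("vid-001", fingerprint(v1_text))

# A policy change edits the lesson text; the stored fingerprint no longer matches.
v2_text = "Expense reports are due within 14 days."
needs_regeneration = is_stale(video, v2_text)
```

Normalizing whitespace before hashing avoids flagging a video as outdated when an author only adds a trailing space or blank line, while any change to the wording still triggers regeneration.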

Looking Ahead: The Evolution of the Active Author

The integration of AI video is the first step toward Active Authoring, where the role of an LMS expands from storage to creation.

- Automated Visuals: Future modules like the Animated Explainer will likely turn raw technical documentation into dynamic, motion-graphic-led lessons automatically.

- Proactive Maintenance: Systems will eventually analyze engagement data or source changes to suggest or even draft necessary video updates.

- Hyper-Personalization: Video content may soon be tailored in real time to the experience level or language of the individual learner.

Lead the Transformation

Lyearn's In-Situ AI Video Generation doesn't just save time. It fundamentally changes what's possible when your LMS understands your content as deeply as you do. By treating lesson context as the input layer for video synthesis, we've eliminated the export-import cycle, the contextual drift, and the technical debt that plague traditional video workflows.

The result: L&D teams can focus on learning strategy while the platform handles production logistics. Your courses don't just contain videos. They generate them, natively, from the content you're already building.

Ready to see the future of AI-powered course creation in action?

Request a Demo | Explore our AI Capabilities